Why can’t I utilize ALL my CPU cores?

I often find users under the misconception that they can find a way to put all those unused CPU cycles they see to work. I don’t mean at idle, I mean during CPU loads. Some think Process Lasso can do this for them, and I hate to tell them that it can’t.

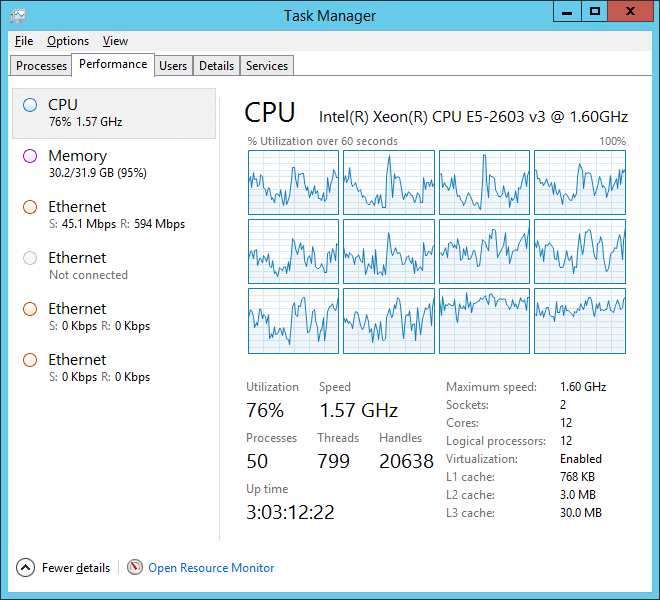

They are understandably frustrated that when they have a CPU-heavy load, their CPU utilization never reaches 100%. What I mean by that is shown in the graphic above. They want those spare CPU cycles to be used. Why are they just sitting there idle when there is work to do? This post will attempt to answer that question in layman’s terms without being too cheesy.

The answer is that analogies about CPUs, like most analogies, are not accurate. CPUs are not automotive engines. They don’t turn gasoline into energy; they execute code.

Each piece of executing code is a ‘thread’. In Windows, a group of threads makes up a process. So a thread is just a series of instructions, either ready or waiting to be executed.

Importantly, a thread can only be executed by one CPU core at a time. There is no way to magically break it up. A thread can be moved between logical CPU cores, but doing that too often just decreases performance, because of the context-switch overhead and the cached data left behind on the old core.
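To put a number on that, here is a minimal sketch of the ceiling this rule implies. The function name `max_cpu_utilization` is made up for illustration, and it assumes every busy thread could keep a whole core occupied, which real loads rarely do.

```python
def max_cpu_utilization(busy_threads: int, logical_cores: int) -> float:
    """Upper bound on total CPU utilization, as a percentage.

    Hypothetical helper for illustration: assumes each busy thread
    could fully occupy one logical core.
    """
    if busy_threads < 0 or logical_cores < 1:
        raise ValueError("need busy_threads >= 0 and logical_cores >= 1")
    # A thread runs on at most one core at a time, so any cores beyond
    # the number of busy threads have nothing to execute.
    cores_in_use = min(busy_threads, logical_cores)
    return 100.0 * cores_in_use / logical_cores


if __name__ == "__main__":
    for threads in (1, 2, 4, 16):
        limit = max_cpu_utilization(threads, 8)
        print(f"{threads} busy thread(s) on 8 logical cores: at most {limit:.1f}%")
```

So a purely single-threaded load on an 8-core machine can never show more than 12.5% total utilization, no matter how hard that one thread works.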

Since a thread can’t be split up, much like an atom before we split it, the efficiency with which logical CPU cores (processors) are utilized is limited by how multi-threaded the user load (the running applications) is. You may say, “Why not write everything multi-threaded?” Indeed, developers strive to use as much parallelism as possible. However, most tasks just can’t be broken up, as they require one operation to be performed before the next can start. You can’t ‘skip ahead’ before prior computations are done.
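One common way to estimate how much those unavoidable sequential steps cost is Amdahl's law. This post doesn't rely on it by name, so treat the sketch below as a standard textbook model brought in for illustration. The function name `amdahl_speedup` and the example fractions are assumptions, not measurements.

```python
def amdahl_speedup(serial_fraction: float, cores: int) -> float:
    """Best-case speedup from Amdahl's law.

    serial_fraction: share of the work that must run one step after
    another (0.0 = fully parallel, 1.0 = fully sequential).
    """
    if not 0.0 <= serial_fraction <= 1.0:
        raise ValueError("serial_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    parallel_fraction = 1.0 - serial_fraction
    # The serial part takes the same time on any core count; only the
    # parallel part is divided among the cores.
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    for serial in (0.0, 0.1, 0.5, 1.0):
        print(f"serial share {serial:.0%}: "
              f"{amdahl_speedup(serial, 8):.2f}x faster on 8 cores")
```

Under this model, a task that is half sequential gets less than a 2x speedup on 8 cores, so most of those cores sit idle most of the time.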

So what decides which thread gets put on which logical core? The answer is the Windows CPU scheduler, a part of the system you’ll never directly see. It is responsible for deciding what logical CPU core to put a thread on, and it’s pretty smart! It knows to try to balance the workload as best it can between the cores, but also not to move threads too often. It knows a hyper-threaded logical core from a normal one (though that distinction matters less these days). In short, it shouldn’t be second-guessed unless you know what you are doing. Certainly there are cases where you know your intent and it doesn’t, so a more optimal CPU core affinity could theoretically be set, and that is where utilities like Process Lasso come in handy! (One of its many features is persistent CPU core affinities for processes.)
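For the curious, a CPU affinity is commonly expressed as a bitmask with one bit per logical core, which is the form the Windows `SetProcessAffinityMask` API takes. The sketch below only shows that arithmetic. The helper names `affinity_mask` and `cores_from_mask` are made up for illustration, it does not change any process's affinity, and it is not a description of how Process Lasso works internally.

```python
from typing import Iterable, List


def affinity_mask(cores: Iterable[int]) -> int:
    """Build a bitmask with one bit set per allowed logical core.

    Hypothetical helper: core 0 is the lowest bit, core 1 the next, etc.
    """
    mask = 0
    for core in cores:
        if core < 0:
            raise ValueError("core indexes must be non-negative")
        mask |= 1 << core
    return mask


def cores_from_mask(mask: int) -> List[int]:
    """Inverse of affinity_mask: list the core indexes set in a mask."""
    if mask < 0:
        raise ValueError("mask must be non-negative")
    return [bit for bit in range(mask.bit_length()) if (mask >> bit) & 1]


if __name__ == "__main__":
    mask = affinity_mask([0, 2])
    print(f"cores 0 and 2 -> mask {mask:#06b}")
    print(f"mask {mask} -> cores {cores_from_mask(mask)}")
```

Restricting a process to cores 0 and 2, for example, sets bits 0 and 2, giving a mask of 5.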

All this is why per-core performance is still very important. It quit growing at the same rate a few years ago and the CPU makers went parallel (more cores). Pay attention not just to the core count, but to the individual core performance!

Tip: The extreme end of swapping a thread from processor to processor too frequently is called Core Thrashing.

Tip: In Linux, a process is more like a thread in Windows, though it can also have true threads. Linux programs commonly fork processes, much like Chrome launches multiple processes of itself in Windows. That is why there are so many instances of Chrome. Each instance shares virtual memory with the others, so it is not as wasteful as it might seem. BTW, Chrome uses an internal ProBalance-like mechanism to maintain responsiveness when its background tabs are consuming resources.
