To understand TurboCore and TurboBoost, you must first understand CPU Dynamic Frequency Scaling (CPU Throttling). Basically, the CPU frequency is scaled down when idle, then scaled back up when there is a load. Simple enough, and it makes sense.

This lowers power consumption and decreases thermal emissions when the CPU is idle.
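To make the idea concrete, here is a toy sketch of a utilization-driven frequency governor. It is an illustrative simplification, not how Windows (or any particular OS) power management actually decides. The P-state table `P_STATES_MHZ` and the function `pick_frequency` are hypothetical, and the linear mapping from load to frequency is an assumption chosen for readability.

```python
import math

# Hypothetical P-state table in MHz, lowest to highest (illustrative values only).
P_STATES_MHZ = [800, 1600, 2400, 3200]


def pick_frequency(utilization: float, p_states=P_STATES_MHZ) -> int:
    """Toy governor: idle -> lowest P-state, full load -> highest P-state.

    `utilization` is the fraction (0.0 to 1.0) of the last sampling
    interval during which the CPU was busy.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0.0 and 1.0")
    # Map utilization linearly onto the table and round up, so any
    # non-trivial load moves the CPU off its idle frequency.
    index = math.ceil(utilization * (len(p_states) - 1))
    return p_states[index]


print(pick_frequency(0.0))  # 800
print(pick_frequency(0.5))  # 2400
print(pick_frequency(1.0))  # 3200
```

With this made-up table, an idle CPU drops to 800 MHz and a fully loaded one runs at 3200 MHz; real governors add sampling windows and hysteresis so the clock doesn't bounce on every brief spike.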

In recent years, CPUs have gained the ability not only to dynamically adjust the clock speed of the CPU as a whole, but also to adjust the clock speeds of individual cores. This is where TurboBoost and TurboCore come in …

Pushing It To The Limit

CPU makers face a constant battle against heat and energy consumption: the less energy a CPU draws, the less heat it produces. For each CPU, the manufacturer therefore defines a set of frequencies at which it can operate safely under sustained high load, staying within its nominal temperature and voltage limits without malfunctioning.

Of course, they use the stock cooler for this test, and we’ll leave the ‘better CPU heatsink’ market out of this.

(Side note on enhanced cooling)

From my experience, unless you go ‘extreme’ (e.g. liquid cooling), you won’t gain much, but if you do go ‘extreme’, you can gain a lot.

AMD’s TurboCore or Intel’s TurboBoost

AMD’s Turbo Core (TurboCore) and Intel’s Turbo Boost (TurboBoost) are essentially the older energy- and heat-conserving frequency scaling in reverse: they increase the frequency of a select group of cores when only a few cores are in use. They can’t boost all cores to the higher frequency, otherwise the CPU or its thermal solution (e.g. the heatsink) would be overwhelmed.

These technologies may push individual cores, again only when a FEW are active, to as high as 150% of their base clock speed. That is a good boost, provided you are only using a few of your cores. It matters most in primarily single-threaded workloads where there isn’t much other activity going on at the same time; there it can deliver a big performance gain.
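The "boost only when a few cores are active" rule can be sketched as a toy model. The function `effective_clock_mhz`, its parameters `boost_core_limit` and `max_boost_ratio`, and their default values are hypothetical. Real CPUs typically use a table of boost steps that shrink as more cores become active, and the actual limits vary by model and by thermal conditions.

```python
def effective_clock_mhz(base_mhz: int, active_cores: int,
                        boost_core_limit: int = 2,
                        max_boost_ratio: float = 1.5) -> int:
    """Toy turbo model: up-clock only while few cores are busy.

    boost_core_limit and max_boost_ratio are illustrative assumptions,
    not values taken from any AMD or Intel specification.
    """
    if active_cores < 1:
        raise ValueError("at least one core must be active")
    if active_cores <= boost_core_limit:
        # Few active cores: enough thermal/power headroom to boost them.
        return round(base_mhz * max_boost_ratio)
    # Too many busy cores: stay at base clock to respect the thermal budget.
    return base_mhz


print(effective_clock_mhz(2800, active_cores=1))  # 4200
print(effective_clock_mhz(2800, active_cores=4))  # 2800
```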

Advantages

The advantage of these technologies is that a few cores can dynamically operate at a higher frequency under certain circumstances. For CPU-bound threads, this means proportionally faster code execution. A CPU-bound thread is essentially one where the CPU, rather than the disk, network, or memory, is the bottleneck.
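For a purely CPU-bound thread, run time is roughly the number of clock cycles the work needs divided by the clock frequency, so the speedup tracks the clock ratio. This sketch assumes the cycle count stays fixed as the clock rises, which ignores memory and I/O stalls; the 6-billion-cycle workload and the 3.0 GHz base clock are made-up example values.

```python
def runtime_seconds(cycles: float, clock_hz: float) -> float:
    """Estimated run time of a fully CPU-bound task: cycles / frequency."""
    return cycles / clock_hz


WORK_CYCLES = 6e9          # hypothetical workload size, in clock cycles
BASE_HZ = 3.0e9            # hypothetical base clock (3.0 GHz)
BOOST_HZ = BASE_HZ * 1.5   # a 150% boost (4.5 GHz)

base_time = runtime_seconds(WORK_CYCLES, BASE_HZ)
boost_time = runtime_seconds(WORK_CYCLES, BOOST_HZ)
print(f"base: {base_time:.2f} s, boosted: {boost_time:.2f} s")
# base: 2.00 s, boosted: 1.33 s
```

A 1.5x clock gives 1.5x throughput, which is roughly a third less wall-clock time for the same work.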

Disadvantages

The largest disadvantage at this time is that, with either technology, the Windows CPU scheduler may not be aware of which processors (cores) are clocked at a higher frequency. It will therefore haphazardly place a thread’s next time slice on whichever core it chooses. To avoid the cost of core thrashing, it may make some attempt to keep that thread on the same core, but the scheduler can still move the thread to a different core every time slice if it decides to. Therefore, there is no guarantee that the active thread(s) will be executing on the up-clocked processor(s).

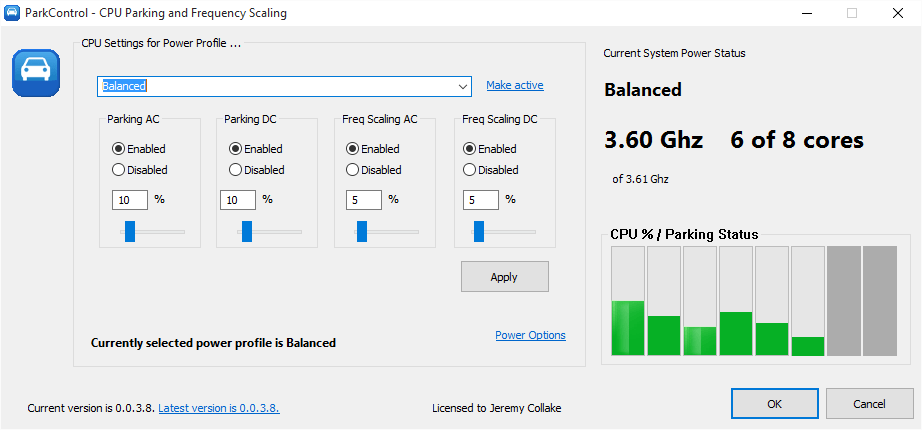

Optimizations

Software like Process Lasso can therefore help you set default (sticky/permanent) CPU affinities for processes (applications) that you know use at most X active threads. An application may have 10 threads while only 1 does any real work, so do not let the total thread count confuse you. For those processes, you can be sure that their threads are limited to a subset of the available cores, all of which can be boosted by AMD’s TurboCore or Intel’s TurboBoost, provided the affinity is configured properly (without too many cores selected).
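A CPU affinity is commonly expressed as a bitmask in which bit *i* set means the process may run on logical processor *i*; this is the form the Win32 `SetProcessAffinityMask` function accepts. The helper `affinity_mask` below is a hypothetical illustration of building such a mask, not Process Lasso’s code, and the choice of logical processors 0 and 1 is purely an example.

```python
def affinity_mask(cores) -> int:
    """Build an affinity bitmask: bit i set => allowed on logical processor i."""
    mask = 0
    for core in cores:
        if core < 0:
            raise ValueError(f"invalid logical processor index: {core}")
        mask |= 1 << core
    return mask


# Example: a mask that would confine a lightly threaded application
# to two logical processors (0 and 1).
two_core_mask = affinity_mask([0, 1])
print(hex(two_core_mask))  # 0x3
```

On Linux, the standard library exposes the same idea as `os.sched_setaffinity(pid, {0, 1})`, which takes a set of CPU indices instead of a mask; the third-party `psutil` package offers `psutil.Process(pid).cpu_affinity([0, 1])` on both Windows and Linux.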

Warning to Overclockers

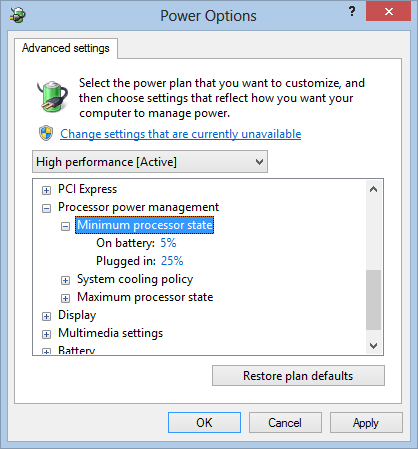

Since many enthusiasts overclock their PCs to achieve marginal performance gains, we should warn that you should turn off these frequency boosting technologies *before* doing any overclocking, or at least be very careful to properly load test overclocked settings. It is generally better for overclockers to disable them: leaving them enabled can limit your overclocking range, because stability tests may fail due to the up-clocked cores. The fact that the Windows scheduler is not aware of these technologies is yet another reason to turn them off.

Instead, aim to run all cores at the maximum frequency that is still stable and safe. Be *sure* to run stability tests: you’d be surprised at how normally a PC can behave day to day, yet hide problems under high load that will get you in trouble later down the road.

Somewhat related: as of January 2012, Microsoft has released an update for the Windows 7 and Windows Server 2008 R2 scheduler that views the new AMD Bulldozer platform as having half real CPUs and half ‘fake’ hyper-threaded CPUs. This encourages the scheduler to put the workload onto every other processor, which is important for two reasons. First, TurboCore will be more likely to kick in. Second, Bulldozer groups its cores in pairs (modules), and the two cores of a module share an L2 cache, FPU, and other resources. They are not fully independent, so you want to back-fill the second core of each module only after the first core of each module is saturated. Don’t worry: if full computing power is needed, all cores get used. This just helps them perform better in ‘lightly threaded’ situations.
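The "every other processor first" placement can be sketched as an ordering of logical processors. This assumes, hypothetically, that logical processors 2k and 2k+1 are the two cores of the same Bulldozer module; actual numbering depends on the system, and `module_first_order` and `place_threads` are illustrative names, not part of the Windows scheduler.

```python
def module_first_order(num_logical: int, cores_per_module: int = 2) -> list[int]:
    """Order logical processors so every module's first core precedes any second core.

    Assumes (hypothetically) that consecutive processor indices belong to
    the same module, e.g. processors 0 and 1 form module 0.
    """
    if num_logical % cores_per_module != 0:
        raise ValueError("processor count must be a multiple of cores_per_module")
    order: list[int] = []
    for slot in range(cores_per_module):
        # slot 0 => first core of every module, slot 1 => second core, ...
        order.extend(range(slot, num_logical, cores_per_module))
    return order


def place_threads(num_threads: int, num_logical: int) -> list[int]:
    """Pick processors for busy threads, spreading across modules first."""
    return module_first_order(num_logical)[:num_threads]


print(module_first_order(8))  # [0, 2, 4, 6, 1, 3, 5, 7]
print(place_threads(3, 8))    # [0, 2, 4]
```

With 8 logical processors (four modules), three busy threads land on processors 0, 2 and 4, one per module; only a fifth thread would start sharing a module, which is the back-filling behavior described above.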